SecureClaw's AI Audit Framework: A Comprehensive Guide to Responsible and Secure AI

Artificial Intelligence (AI) is already being used in real organizations across industries - from healthcare and finance to retail and manufacturing - to improve efficiency, decision-making, and customer experience. For example, Amazon uses AI for personalized product recommendations, JPMorgan Chase applies AI for fraud detection, and Advocate Aurora Health in the U.S. deploys AI-powered imaging tools to assist doctors in diagnosing critical conditions.

AI is reshaping many industries, but its complexity introduces risks that traditional audits cannot fully address. SecureClaw's framework integrates governance, ethics, compliance, and technical safeguards - including Vulnerability Assessment and Penetration Testing (VAPT) and Static Application Security Testing (SAST) - to ensure AI systems remain secure, fair, and trustworthy.

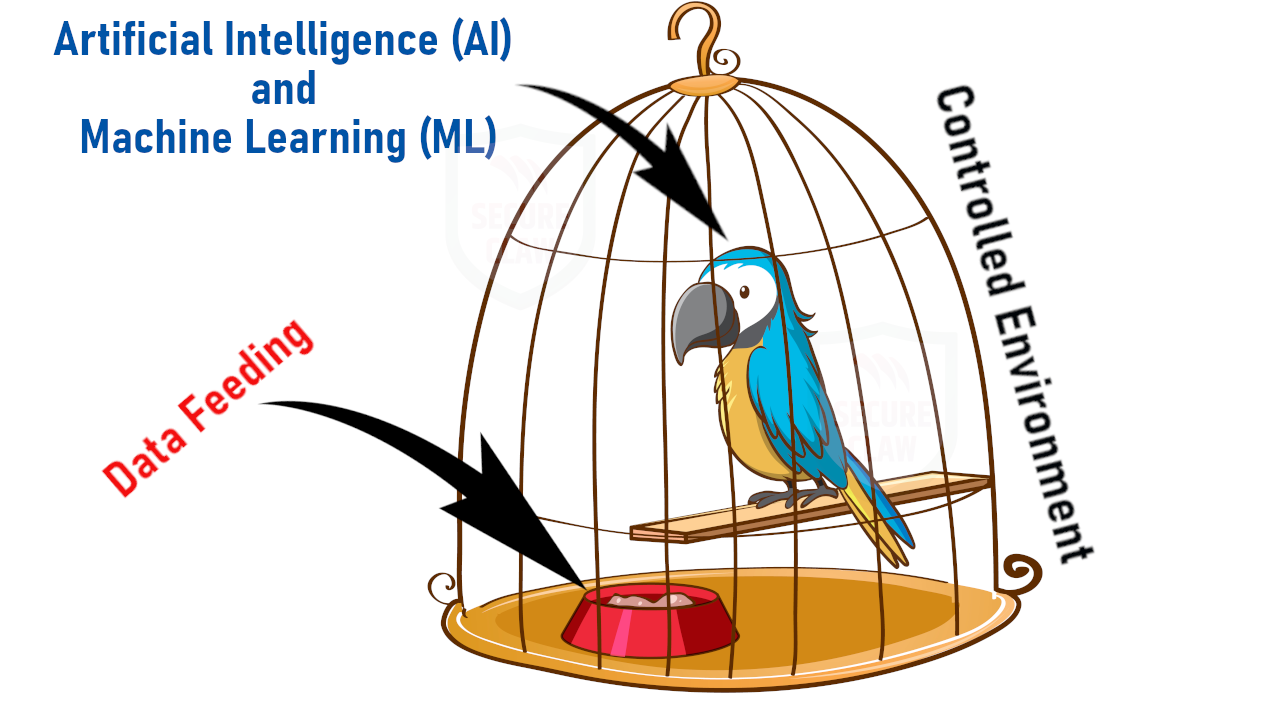

AI as a Parrot: A Security Analogy

Dr. Shekhar A. Pawar, CEO of SecureClaw, compares artificial intelligence to a pet parrot, an analogy that is especially apt when viewed through the lens of data privacy.

- Learning and Repetition: A parrot can only mimic what it has been taught. It doesn't truly understand meaning or emotions - it simply repeats data. Similarly, AI systems generate responses based on the information they are trained on. They don't "feel" or "understand" in a human sense; they process patterns.

- Controlled Environment vs. Exposure: If the parrot stays inside the house, its speech remains private. It may repeat family conversations, but only within a safe boundary. In the same way, an AI system confined to a secure environment (with strong access controls and encryption) can handle sensitive data responsibly.

- Risk of Leakage: The danger arises when the parrot leaves the house and starts repeating private conversations in public. That's equivalent to an AI system interacting outside its secure boundary - sharing or exposing sensitive information without proper safeguards. This is what we call data leakage.

- Implication for AI Security: Just as a responsible pet owner ensures the parrot doesn't fly out and reveal secrets, organizations must ensure AI systems are governed by strict privacy policies, secure architectures, and compliance frameworks. Otherwise, the AI could unintentionally "parrot" confidential data to external parties.

"AI has the power to transform industries, but without accountability, it risks eroding trust. SecureClaw was built on the belief that responsible innovation requires both ethical oversight and technical resilience. By embedding safeguards into every audit, we help organizations unlock AI's potential while protecting the values that matter most."

Dr. Shekhar A. Pawar, CEO, SecureClaw

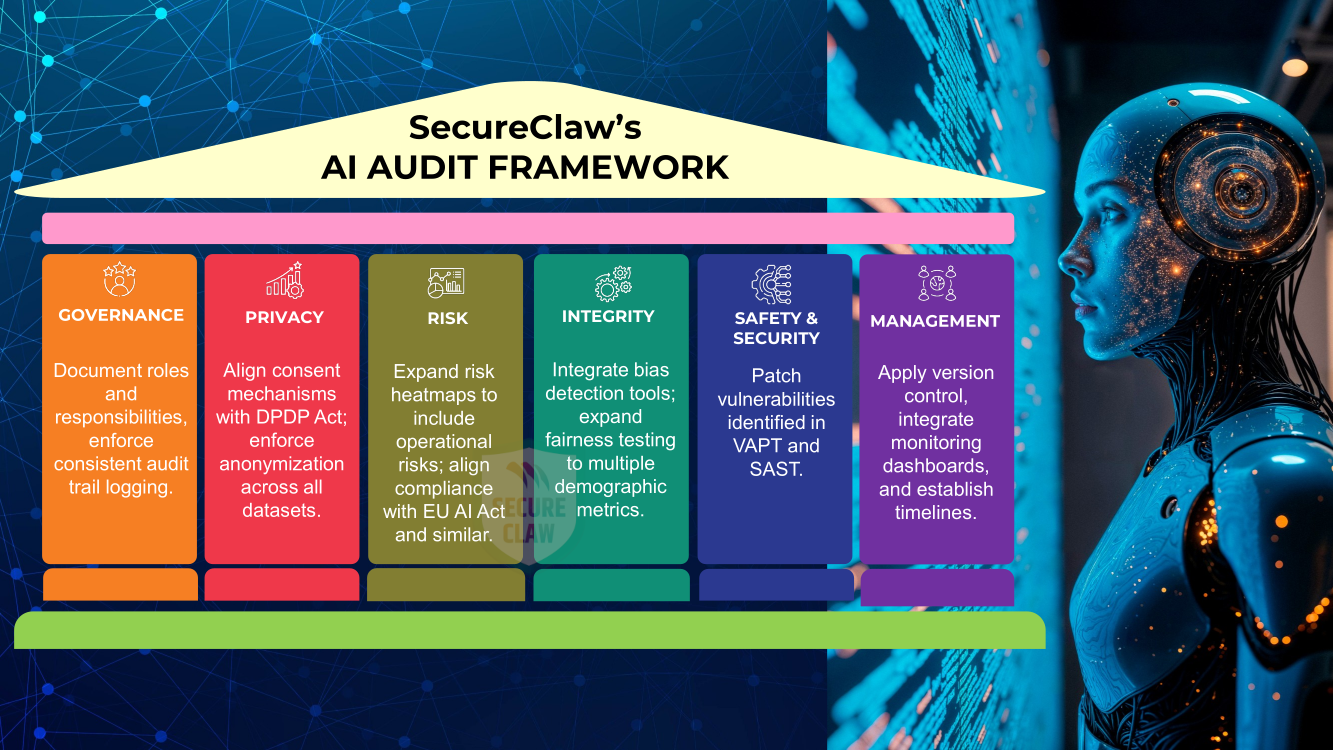

The Six Pillars of SecureClaw's Framework

At the heart of SecureClaw's AI Audit Framework lie six foundational pillars that define what responsible and secure AI should look like. Each pillar represents a critical dimension - from governance and privacy to risk, integrity, safety, and lifecycle management. Together, they form a holistic structure that not only ensures compliance with global regulations but also embeds ethical oversight and technical resilience into every stage of an AI system's journey. These pillars are the backbone of trust, guiding organizations to deploy AI with confidence, accountability, and security.

- Governance: Effective governance begins with a dedicated AI oversight committee that defines accountability and ensures transparency. Every model update, retraining, or deployment change must be logged in audit trails, creating a clear record of decision-making. Roles and responsibilities should be explicitly documented so that accountability is never ambiguous, and compliance obligations are consistently met.

- Privacy: AI systems must respect privacy by anonymizing personal data before training, thereby reducing risks of exposure. Consent mechanisms should align with global regulations such as EU GDPR, California Consumer Privacy Act (CCPA), and India's DPDP Act, ensuring individuals retain control over their information. SecureClaw emphasizes data minimization, collecting only what is strictly necessary, which reduces both ethical concerns and the potential impact of breaches.

- Risk: Risk management requires a structured approach. SecureClaw recommends creating risk heatmaps for each AI system to visualize vulnerabilities and prioritize mitigation. Operational risks, such as downtime or misclassification, should be documented alongside reputational risks. Compliance risks must be mapped against evolving regulations like the EU AI Act, ensuring organizations remain legally protected while deploying AI.

- Integrity: Integrity is about fairness, transparency, and ethical alignment. AI systems should be tested against fairness metrics to confirm that outputs treat different demographic groups equitably. Explainability must be documented so stakeholders understand how decisions are made, reducing the "black box" effect. Bias detection tools should be integrated into pipelines to identify and correct skewed training data before deployment.

- Safety and Security: Safety and security form the technical backbone of SecureClaw's framework. VAPT plays a critical role by simulating real-world attacks on AI APIs and endpoints, uncovering exploitable weaknesses and testing resilience against adversarial inputs. SAST complements this by scanning source code and integration pipelines for insecure coding practices, vulnerable dependencies, or risks of data leakage. Together, these safeguards ensure AI systems are hardened against both external threats and internal flaws. Beyond these, fail-safe mechanisms must be embedded into critical AI systems to default to safe states during failures, while anomaly detection continuously monitors for model drift or unusual behavior that could signal emerging risks.

- Management: Strong management practices ensure AI systems remain reliable over time. Version control should be applied to both models and datasets, enabling rollback if issues arise. Continuous monitoring dashboards provide real-time visibility into performance and risks, while remediation plans ensure corrective actions are documented and implemented whenever audits reveal weaknesses. This lifecycle approach transforms audits from one-time checks into ongoing assurance.
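
The drift monitoring mentioned under the Safety and Security and Management pillars can be illustrated with a short sketch. This is not SecureClaw's implementation: it assumes the Population Stability Index (PSI) as the drift statistic, which is one common choice among several. The function name `population_stability_index`, the bin count, and the `DRIFT_THRESHOLD` value of 0.2 (a widely quoted rule of thumb) are hypothetical and would need tuning for a real system.

```python
import math
from typing import List, Sequence

# Hypothetical alert level; 0.2 is a commonly quoted rule of thumb, not a standard.
DRIFT_THRESHOLD = 0.2


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of a numeric model score using equal-width bins.

    Bin edges are derived from the baseline sample. A value of 0.0 means the
    binned distributions are identical; larger values mean a larger shift.
    """
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bin_fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base = bin_fractions(baseline)
    curr = bin_fractions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, curr))


def has_drifted(baseline: Sequence[float], current: Sequence[float]) -> bool:
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return population_stability_index(baseline, current) > DRIFT_THRESHOLD
```

In a monitoring dashboard, `baseline` might hold model scores captured at deployment time and `current` the scores from the most recent window; a `True` result from `has_drifted` would then open a remediation item rather than trigger an automatic rollback.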

Unique Value of SecureClaw's Framework

SecureClaw's framework is distinctive because it combines ethical, regulatory, and technical safeguards into one model. By embedding VAPT and SAST into the audit methodology, it ensures resilience against cyber threats while maintaining compliance and fairness. Risk heatmaps provide visual clarity, and lifecycle monitoring guarantees that AI systems remain trustworthy long after deployment.
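
As a rough illustration of how a risk heatmap might be assembled, the sketch below places risks on a 5x5 likelihood-by-impact grid. The 5-point scales, the band cut-offs in `band`, and all names (`Risk`, `build_heatmap`, `render`) are assumptions made for this example; the source does not specify how SecureClaw scores or renders its heatmaps, and the sample ratings are invented placeholders.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    def __post_init__(self) -> None:
        if not (1 <= self.likelihood <= 5 and 1 <= self.impact <= 5):
            raise ValueError("likelihood and impact must be between 1 and 5")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def band(score: int) -> str:
    """Map a score to a colour band; the cut-offs are illustrative only."""
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


def build_heatmap(risks: Iterable[Risk], size: int = 5) -> List[List[List[str]]]:
    """Return grid[impact - 1][likelihood - 1] -> list of risk names in that cell."""
    grid: List[List[List[str]]] = [[[] for _ in range(size)] for _ in range(size)]
    for risk in risks:
        grid[risk.impact - 1][risk.likelihood - 1].append(risk.name)
    return grid


def render(grid: List[List[List[str]]]) -> str:
    """Text rendering: highest impact on the top row, cell value = number of risks."""
    lines = []
    for impact in range(len(grid), 0, -1):
        cells = " ".join(str(len(cell)) for cell in grid[impact - 1])
        lines.append(f"impact {impact} | {cells}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Placeholder ratings for the risk types named in the framework.
    risks = [
        Risk("model downtime", likelihood=2, impact=4),
        Risk("misclassification", likelihood=3, impact=4),
        Risk("EU AI Act non-compliance", likelihood=2, impact=5),
    ]
    print(render(build_heatmap(risks)))
    for r in sorted(risks, key=lambda r: r.score, reverse=True):
        print(f"{r.name}: score={r.score} band={band(r.score)}")
```

Sorting by score gives auditors a simple mitigation order, while the grid shows where risks cluster.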

Recommendations for Adoption

Organizations should begin by forming a dedicated AI audit team with expertise in governance, compliance, and cybersecurity. Regular VAPT and SAST cycles must be scheduled to catch vulnerabilities early, while comprehensive documentation of model updates and retraining ensures transparency. Aligning audits with global and local regulations such as the EU AI Act and India's DPDP Act is essential, and independent third-party audits should be used for high-risk deployments to strengthen credibility.
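
One way to make documentation of model updates and retraining tamper-evident is a hash-chained audit trail, sketched below. This is an assumption about how such a log could be built, not a description of SecureClaw's tooling; the field names, `append_entry`, and `verify` are hypothetical, and a production system would also need durable storage and access control.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(
    trail: List[Dict[str, str]], event: str, model_version: str, actor: str
) -> Dict[str, str]:
    """Append an event (e.g. 'retraining', 'deployment') linked to the previous entry."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "model_version": model_version,
        "actor": actor,
        "prev_hash": trail[-1]["hash"] if trail else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry


def verify(trail: List[Dict[str, str]]) -> bool:
    """Return True only if every entry is unmodified and correctly chained."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each entry embeds the hash of its predecessor, editing or deleting an earlier record causes `verify` to fail, which gives an independent third-party auditor a quick integrity check before reviewing the log's contents.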

Auditing AI in organizations is critical because bias, privacy risks, and security vulnerabilities can undermine trust and cause harm. Frameworks like SecureClaw's - which combine governance, ethics, compliance, and technical safeguards (VAPT, SAST) - help ensure that AI systems remain responsible, secure, and reliable across industries.

SecureClaw's AI Audit Framework is a future-ready model for responsible AI assurance. By combining governance rigor, ethical oversight, regulatory compliance, and technical safeguards like VAPT and SAST, SecureClaw ensures that AI systems remain secure, fair, compliant, and trustworthy throughout their lifecycle.